Service Orchestration Framework

- Category: Service Framework

- User: HERE Technologies

- Project year: 2022

- Technologies: Java, Scala, AWS S3, AWS EC2, Grafana, Splunk, Apache ZooKeeper, PostgreSQL.

What is the Service Orchestration Framework?

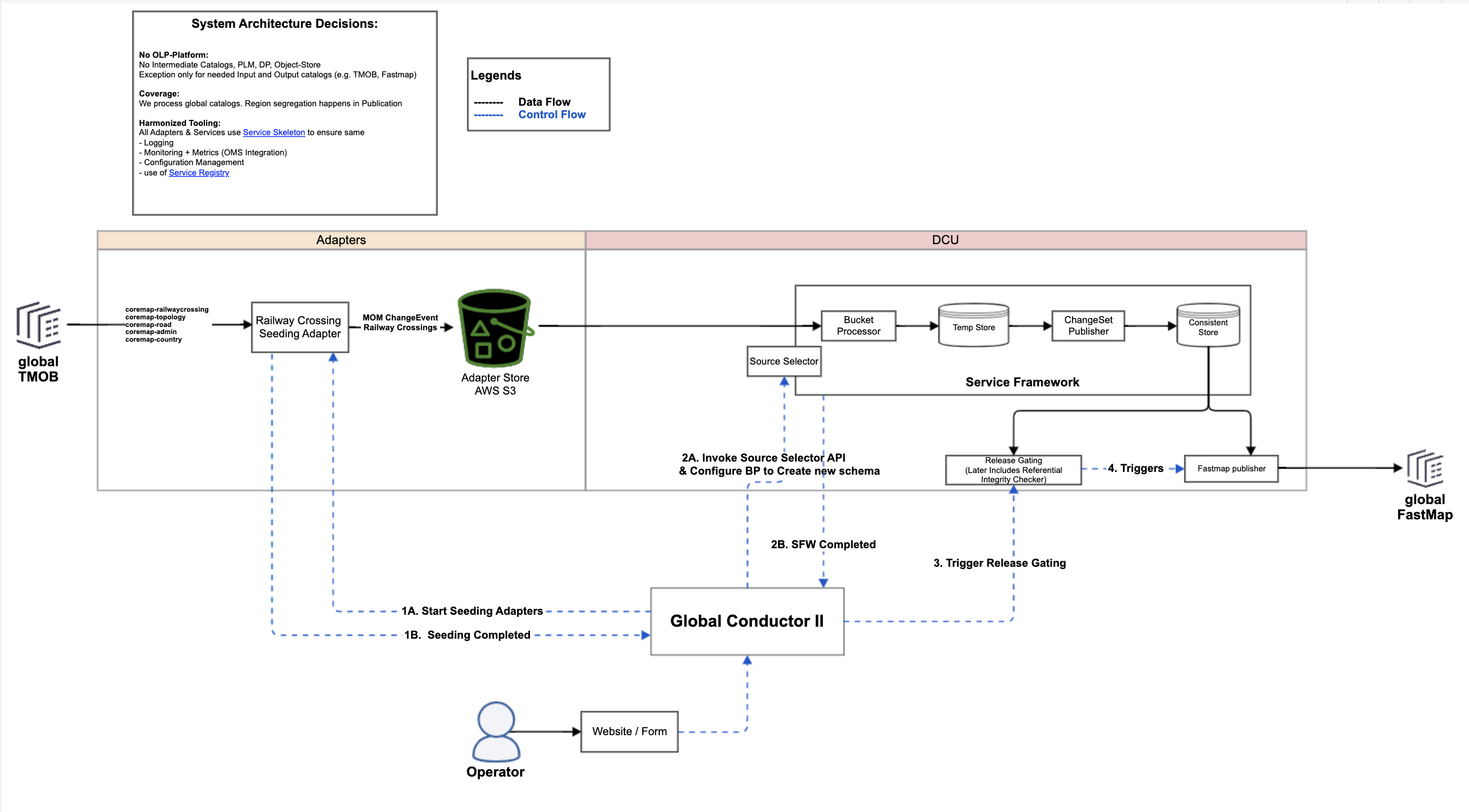

It is an orchestration framework built to produce an always-consistent map, at the cost of freshness in some areas (those where moderation is needed).

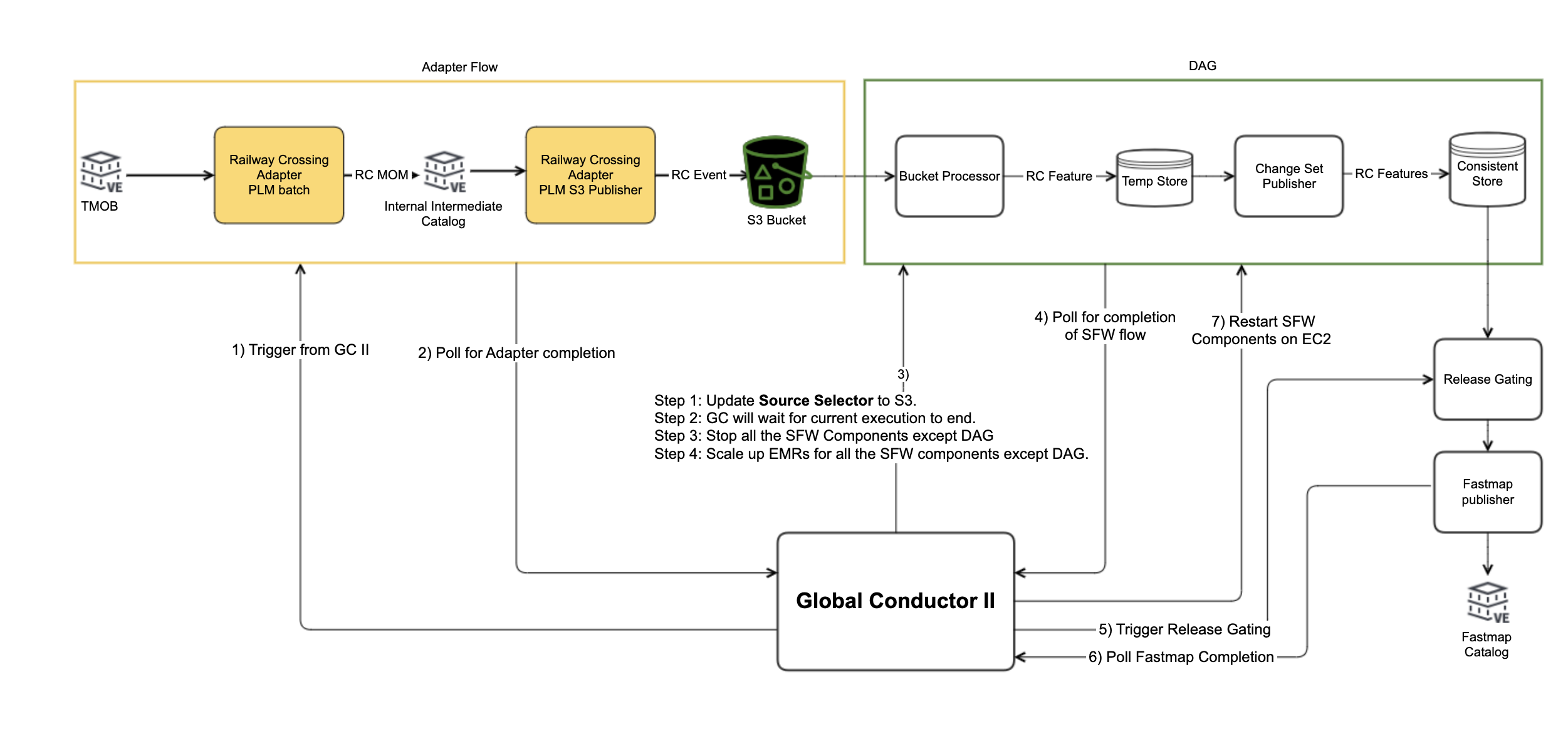

In the main flow of the system, events are received asynchronously and grouped into discrete buckets based on their arrival time. Late events are supported, as in the Snapshot service. Following an external schedule, the Batch Processor selects a source bucket and sends it to the Orchestration Framework.
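The grouping step can be illustrated with a minimal sketch. It assumes fixed-width buckets keyed by the start of the arrival-time window. The class name `ArrivalTimeBucketer`, its methods, and the string payloads are hypothetical and not part of the framework. Late-event handling is not modeled, because the text does not describe how it works.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical sketch: groups events into fixed-width buckets keyed by arrival time. */
final class ArrivalTimeBucketer {
    private final long widthMillis;
    // Bucket start (epoch millis) -> event payloads, kept sorted by bucket start.
    private final TreeMap<Long, List<String>> buckets = new TreeMap<>();

    ArrivalTimeBucketer(Duration bucketWidth) {
        this.widthMillis = bucketWidth.toMillis();
        if (widthMillis <= 0) {
            throw new IllegalArgumentException("bucket width must be at least 1 ms");
        }
    }

    /** Start of the bucket that contains the given arrival time. */
    long bucketStart(Instant arrival) {
        return Math.floorDiv(arrival.toEpochMilli(), widthMillis) * widthMillis;
    }

    /** Stores the event under the bucket of its arrival time and returns that bucket's start. */
    long add(String payload, Instant arrival) {
        long start = bucketStart(arrival);
        buckets.computeIfAbsent(start, k -> new ArrayList<>()).add(payload);
        return start;
    }

    /** Removes and returns the oldest bucket, or null if there is none. */
    Map.Entry<Long, List<String>> pollOldest() {
        return buckets.pollFirstEntry();
    }

    int bucketCount() {
        return buckets.size();
    }
}
```

In this sketch a scheduler-driven caller, standing in for the Batch Processor, would call `pollOldest()` and pass the returned bucket on for processing.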

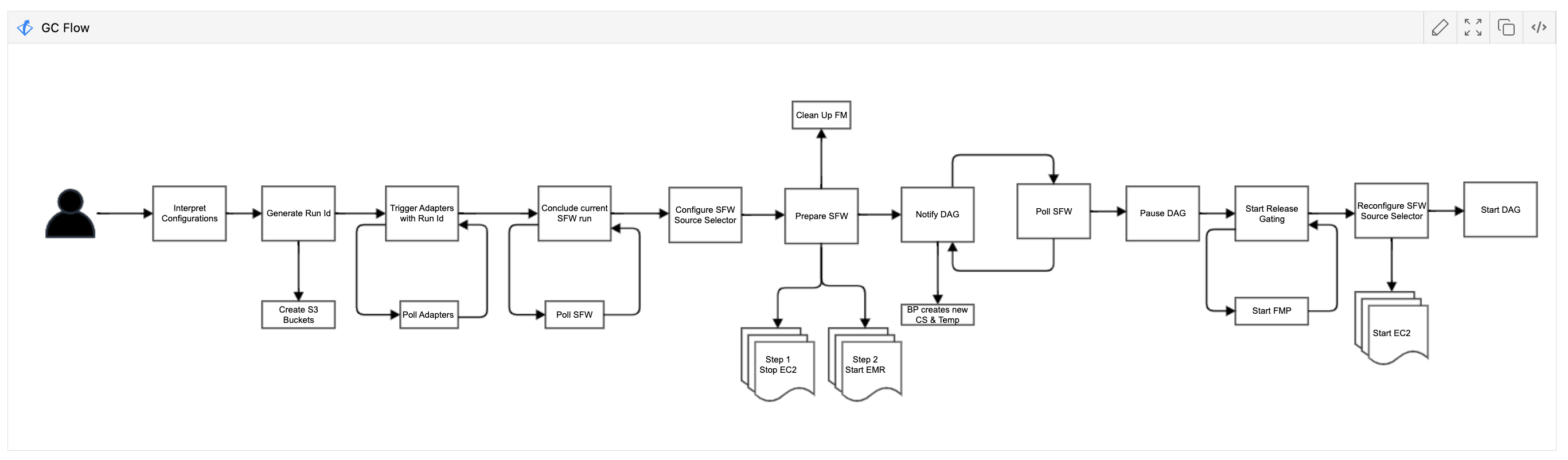

Within the Orchestration Framework, the following steps are performed:

The Event Partitioner copies the input events from the bucket into the Temporary Store. Partitioning the events is optional and depends on the chosen storage method.

The Execution DAG Builder calls the Feature Service API of each feature service sequentially, in the order determined by the service descriptors.
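One plausible way to derive a call order from descriptors is a topological sort. The sketch below assumes each descriptor lists the services that must run before it. The text does not specify the descriptor format, so `ServiceDescriptor`, its `dependsOn` field, and `ExecutionOrder` are hypothetical names. The sort uses Kahn's algorithm.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical descriptor: a service name plus the services that must run before it. */
final class ServiceDescriptor {
    final String name;
    final List<String> dependsOn;

    ServiceDescriptor(String name, List<String> dependsOn) {
        this.name = name;
        this.dependsOn = dependsOn;
    }
}

final class ExecutionOrder {
    /** Returns service names in an order that respects every dependency (Kahn's algorithm). */
    static List<String> resolve(List<ServiceDescriptor> descriptors) {
        Map<String, Integer> unmet = new LinkedHashMap<>();      // service -> unmet dependency count
        Map<String, List<String>> dependents = new HashMap<>();  // service -> services waiting on it
        for (ServiceDescriptor d : descriptors) {
            unmet.put(d.name, d.dependsOn.size());
        }
        for (ServiceDescriptor d : descriptors) {
            for (String dep : d.dependsOn) {
                if (!unmet.containsKey(dep)) {
                    throw new IllegalArgumentException("unknown dependency: " + dep);
                }
                dependents.computeIfAbsent(dep, k -> new ArrayList<>()).add(d.name);
            }
        }
        Deque<String> ready = new ArrayDeque<>();
        for (Map.Entry<String, Integer> e : unmet.entrySet()) {
            if (e.getValue() == 0) {
                ready.add(e.getKey());
            }
        }
        List<String> order = new ArrayList<>();
        while (!ready.isEmpty()) {
            String next = ready.poll();
            order.add(next);
            for (String waiting : dependents.getOrDefault(next, Collections.<String>emptyList())) {
                // A service becomes ready once its last unmet dependency has been scheduled.
                if (unmet.merge(waiting, -1, Integer::sum) == 0) {
                    ready.add(waiting);
                }
            }
        }
        if (order.size() != unmet.size()) {
            throw new IllegalStateException("descriptors contain a dependency cycle");
        }
        return order;
    }
}
```

Rejecting cycles up front means a misconfigured descriptor fails before any feature service touches the data.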

Upon receiving a call, each feature service processes the data in the Temporary Store using whatever method is most efficient for it. It can load data from both the Temporary Store and the Consistent Store, and it can hand the processing off to a Big Data processing stack such as Spark, Flink, or AWS Lambda.

The feature service writes the processed data back to the Temporary Store.
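The read and write rules for a feature service can be sketched as a small contract. This is an illustration under assumptions: `ReadableStore`, `InMemoryStore`, `FeatureService`, and `SequentialRunner` are hypothetical names, and in-memory maps stand in for the real stores. Passing the Consistent Store as a read-only type reflects that feature services write only to the Temporary Store.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical read-only view of a store. */
interface ReadableStore {
    String get(String key);
}

/** Hypothetical in-memory stand-in for a store; returns null for missing keys. */
final class InMemoryStore implements ReadableStore {
    private final Map<String, String> data = new HashMap<>();

    @Override
    public String get(String key) {
        return data.get(key);
    }

    void put(String key, String value) {
        data.put(key, value);
    }
}

/** Hypothetical Feature Service API: read from both stores, write only to the temporary one. */
interface FeatureService {
    void process(InMemoryStore temporary, ReadableStore consistent);
}

final class SequentialRunner {
    /** Calls each service in the given order against the same Temporary Store. */
    static void run(List<FeatureService> ordered, InMemoryStore temporary, ReadableStore consistent) {
        for (FeatureService service : ordered) {
            service.process(temporary, consistent);
        }
    }
}
```

Because every service shares one Temporary Store, a later service can build on what an earlier one wrote, which is why the call order matters.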

Once the DAG execution is complete, the Change Set Publisher retrieves the changes from the Temporary Store. It verifies the changes and decides whether to send them to the Dead Letter Bucket or apply them to the Consistent Store.
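The publish decision can be sketched as a verify-then-route step. The text does not say whether verification is per change or per change set. This sketch assumes the set is handled atomically: one invalid change sends the whole set to the Dead Letter Bucket. The `ChangeSetPublisher` shape, its `Destination` enum, and the in-memory lists that stand in for the two destinations are all hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Predicate;

/** Hypothetical sketch of the publish step: verify a change set, then route it. */
final class ChangeSetPublisher<C> {
    enum Destination { CONSISTENT_STORE, DEAD_LETTER_BUCKET }

    private final Predicate<C> verifier;
    // In-memory stand-ins for the real destinations.
    final List<C> consistentStore = new ArrayList<>();
    final List<List<C>> deadLetterBucket = new ArrayList<>();

    ChangeSetPublisher(Predicate<C> verifier) {
        this.verifier = verifier;
    }

    /**
     * Applies the change set only if every change passes verification.
     * Assumption: a single invalid change rejects the whole set.
     */
    Destination publish(List<C> changeSet) {
        boolean allValid = changeSet.stream().allMatch(verifier);
        if (allValid) {
            consistentStore.addAll(changeSet);
            return Destination.CONSISTENT_STORE;
        }
        // Keep a copy of the rejected set so it can be inspected or replayed later.
        deadLetterBucket.add(new ArrayList<>(changeSet));
        return Destination.DEAD_LETTER_BUCKET;
    }
}
```

Treating the set atomically matches the stated goal of an always-consistent map: rejected changes delay freshness but never leave the Consistent Store partially updated.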